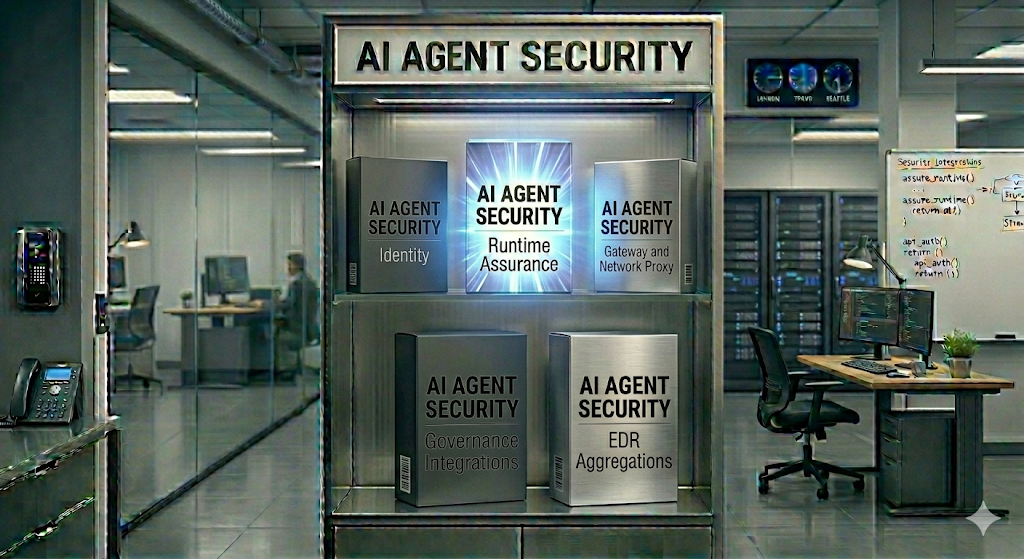

Not All AI Agent Security Solutions Are the Same

The Certiv team just returned from RSA 2026 in San Francisco, where Certiv emerged from stealth and introduced Runtime Assurance for AI Agents to the security community. One thing was immediately clear from the conversations we had throughout the week, across meetings, dinners, and demos: there is no shortage of vendors claiming visibility and control over AI agents. But architecturally, these solutions diverge in ways that have real consequences for what you can actually see and stop.

Many of these tools serve important functions in the security stack. The question isn’t whether they have value. It’s whether they’re sufficient on their own to secure a new class of autonomous, non-deterministic software operating across your environment.

Here’s what we observed.

Identity-Based Approaches: Per-Action Token Gating

Some solutions extend traditional IAM patterns into the agentic world, issuing scoped tokens on a per-action basis. Every tool call or API request requires an authorization check before execution.

Identity is foundational. You need to know who is acting, and token-level telemetry can surface patterns in how an agent uses its permissions. But what it can’t tell you is why the agent is requesting that authorization in the first place. Was the action part of a legitimate workflow, or did a prompt injection redirect the agent’s objective mid-session? Is the agent chaining tool calls toward an unintended outcome, or exfiltrating data through channels it’s fully authorized to use? Identity tells you what’s allowed. It doesn’t tell you what should be happening.
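To make the gap concrete, here is a minimal Python sketch of a per-action token gate. Everything in it is hypothetical: `ScopedToken`, `ToolCall`, `token_gate`, the scope names, and the `origin` field are illustrative names, not any vendor's API. The gate checks only whether the requested tool falls within the token's scopes. As a result, a call redirected by a prompt injection passes exactly like a legitimate one.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ScopedToken:
    """Hypothetical per-action token: which agent holds it and what it may call."""
    agent_id: str
    scopes: frozenset


@dataclass
class ToolCall:
    """Hypothetical tool invocation requested by an agent."""
    tool: str
    args: dict = field(default_factory=dict)
    # Why the agent is making this call. Recorded here for illustration only;
    # the identity gate below never reads it.
    origin: str = "unknown"


def token_gate(token: ScopedToken, call: ToolCall) -> bool:
    """Per-action authorization: allow the call if the tool is in scope."""
    return call.tool in token.scopes


token = ScopedToken("agent-1", frozenset({"crm.read", "http.post"}))

legit = ToolCall("http.post", {"url": "https://partner.example/api"},
                 origin="user asked to sync CRM with partner")
injected = ToolCall("http.post", {"url": "https://attacker.example/drop"},
                    origin="instruction hidden in a fetched web page")

# Both calls are within scope, so the gate authorizes both.
print(token_gate(token, legit), token_gate(token, injected))  # True True
```

Catching the second call would require a check that reasons about `origin` and the session's objective. A gate that sees only the token and the tool name never receives that information.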

The ongoing “battle” between team MCP and team CLI in agentic tooling adds another complication. Local, on-device CLI calls run with whatever permissions the endpoint account already holds rather than passing through a per-action token check. That makes identity-based approaches hard to apply at scale, especially when more privileged accounts also exist on the same endpoint.

Governance Integrations: Platform-Level Policy Enforcement

Another category integrates directly into AI orchestration frameworks, hooking into LangChain, CrewAI, or proprietary agent platforms to enforce policies at the API boundary.

Governance at the platform level is valuable, especially for teams standardizing on a single framework. But these tools only see what the orchestration layer exposes, and they require the agent or application to be configured to use them. Agents running outside the instrumented framework, making direct system calls, or interacting with local resources are invisible. If an employee spins up an agent that hasn’t been integrated, or uses a tool that bypasses the governance layer entirely, you have no coverage. In heterogeneous environments, those gaps compound as agent adoption grows.

Gateway and Network Proxy Approaches: Controlling the Pipe

A third category positions itself at the network layer, routing agent traffic through a gateway or proxy that inspects and filters requests before they reach external services.

Gateways are well-suited for monitoring and filtering outbound traffic. But agents don’t just make outbound API calls. They read local files, execute shell commands, interact with local databases, call MCP servers, and chain together multi-step workflows that never touch the network. A gateway only sees what crosses the wire. An agent writing sensitive data to a local file, spawning a subprocess, or reasoning its way into an unintended action path is invisible to a network proxy.

There’s also a practical constraint: gateways assume you can funnel all agent traffic through a single chokepoint. In reality, agents operate across multiple surfaces: local and remote, on-machine and in-browser, through direct API calls and through tool abstractions like MCP. No single network chokepoint covers all of those paths.

EDR Aggregation: Repackaging Endpoint Telemetry

Some solutions aggregate telemetry from existing EDR tools, attempting to reconstruct agent behavior from process-level and network-level signals. This is where the distinction becomes critical, because on the surface this approach sounds the most similar to what Certiv does: both involve the endpoint. But the similarities end there.

EDR is essential infrastructure. It’s purpose-built to detect malicious processes, lateral movement, and known attack patterns at the process and syscall level. That’s the right abstraction for detecting malware. It’s the wrong abstraction for understanding AI agents.

What EDR wasn’t designed to capture:

- Semantic context. EDR sees that a process made an HTTPS request. It doesn’t know that an AI agent decided to send your customer database to an external API because a prompt injection altered its objective mid-session.

- Agent intent and reasoning. EDR has no concept of tool calls, chain-of-thought, or agent decision graphs. It sees network I/O, not the why behind it.

- Session-level behavior. Agent sessions span multiple tool invocations, context windows, and decision points. EDR sees discrete events with no model for correlating these into a coherent session.

- Cross-framework activity. EDR doesn’t differentiate between an agent running on AutoGen versus CrewAI versus a custom MCP implementation. It sees them all as the same process making network calls.

Solutions that aggregate EDR data inherit these limitations. You can’t extract agentic context from telemetry that was never designed to capture it.
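The session-level gap described in the list above can be illustrated with a toy Python sketch. It assumes each event carries a `session_id` tying it to a single agent session, which is exactly the context process-level telemetry usually lacks. The event kinds, the sensitive path prefix, and the host allow-list are all hypothetical. Judged one at a time, a file read and an outbound HTTPS request both look routine. Correlated in order within a session, a read of customer data followed by an upload to an unknown host stands out. This is a generic illustration of session correlation, not a description of how Certiv or any EDR product works.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    session_id: str  # assumption: events can be attributed to an agent session
    seq: int         # order of the event within the session
    kind: str        # hypothetical kinds: "file_read", "http_request"
    target: str      # file path or destination host


SENSITIVE_PREFIXES = ("/data/customers",)  # hypothetical sensitive locations
ALLOWED_HOSTS = {"api.internal.example"}   # hypothetical egress allow-list


def flag_sessions(events: list[Event]) -> set[str]:
    """Flag sessions that read sensitive data and later send to an unlisted host."""
    by_session: dict[str, list[Event]] = defaultdict(list)
    for e in events:
        by_session[e.session_id].append(e)

    flagged = set()
    for sid, session_events in by_session.items():
        touched_sensitive = False
        # Walk each session in order so the read-then-send sequence is visible.
        for e in sorted(session_events, key=lambda ev: ev.seq):
            if e.kind == "file_read" and e.target.startswith(SENSITIVE_PREFIXES):
                touched_sensitive = True
            elif (e.kind == "http_request" and touched_sensitive
                  and e.target not in ALLOWED_HOSTS):
                flagged.add(sid)
    return flagged


events = [
    Event("s1", 1, "file_read", "/data/customers.csv"),
    Event("s1", 2, "http_request", "upload.example.net"),
    Event("s2", 1, "file_read", "/home/dev/README.md"),
    Event("s2", 2, "http_request", "upload.example.net"),
]

print(flag_sessions(events))  # {'s1'}
```

The per-session ordering is what carries the signal. The same two events split across different sessions, or occurring in the opposite order, would not be flagged.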

The Missing Layer

Each of these approaches covers a real part of the problem. Identity manages access. Governance enforces platform-level rules. Gateways filter network traffic. EDR detects threats at the process level. But they share a common gap:

- No semantic understanding. None of them understand agent intent. Without that, you can’t distinguish a legitimate workflow from a dangerous one.

- No pre-execution enforcement at the agent level. Detection happens after the fact. Logs record what went wrong. Alerts confirm damage. Nothing stops a risky agent action before it executes.

These tools aren’t the problem. The missing layer is.

Where Certiv Fits

Certiv doesn’t replace these tools. It fills the gap between them.

We built for the endpoint because that’s where the work happens. Claude Code, Cursor, GitHub Copilot, Codex, and OpenClaw execute directly on endpoints, reading local files, running shell commands, calling MCP servers, and interacting with production systems. And it’s not just developers. Knowledge workers are adopting tools like Claude Cowork and Perplexity Computer to automate everyday workflows, often without security teams even knowing they’re there.

Certiv’s Runtime Assurance Layer instruments at the execution layer, below the application and above the OS, intercepting agent behavior at the point where decisions become actions. Full semantic visibility. Pre-execution enforcement. Intent-based policy. Across every agent, every framework, every endpoint.

To learn how it works, visit certiv.ai/approach.

The Bottom Line

Your security stack works. It just wasn’t built for agents.

Identity manages who. Governance manages where. Gateways manage what crosses the wire. EDR manages what runs on the machine. But right now, no one is managing what the agent is actually doing, why it’s doing it, or what it’s about to do next.

And your employees aren’t waiting. They’re already running agents on their machines today, with access to source code, credentials, customer data, and production systems. Every day without visibility into that layer is a day you’re trusting software you can’t see to make decisions you can’t control.

That’s the layer Certiv was built for. That’s Runtime Assurance.

Book a demo and see what’s already running in your environment.

— Tai, VP of Product, Certiv